Visual Technology Tutorial, Part 7: Color Processing

This is Part 7 of a video processing technology training series extracted from RGB Spectrum's Design Guide.

Color Perception

Seeing color is a perceptual experience that happens when light is received by the human retina. The retina is sensitive to light primarily in the region of 400 to 700 nanometers in wavelength. There are three types of color-sensitive photoreceptive “cone” cells in the retina which each respond to different sub-bands within the 400 to 700 nm range. These correspond roughly to blue (peaking around 445 nm), green (peaking around 535 nm), and red (peaking around 575 nm). For this reason, red, green, and blue are considered the primary colors for light perception and video processing/display applications.

Color Models

A virtually infinite range of colors can be defined using the parameters of one or more color models. The most common and well-known color model is RGB, in which colors are expressed as combinations of red, green, and blue. RGB is the color model for most computer monitors and video systems that use 24-bit color depth, which means that each of the three color components of every pixel is represented by eight bits of data. This data is a numerical representation of the “brightness” of each color. Because 8 bits can take 2^8, or 256, possible values, 24-bit color depth provides 256 discrete brightness levels for each color (R, G, or B), resulting in about 16.7 million (256 x 256 x 256) color variations.
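The arithmetic behind 24-bit color can be checked with a few lines of Python. This is a minimal sketch: the function names and the 0xRRGGBB packing order are assumptions chosen for illustration, since the text does not specify how the three 8-bit values are laid out in memory.

```python
BITS_PER_CHANNEL = 8
LEVELS = 2 ** BITS_PER_CHANNEL      # 256 brightness levels per channel
TOTAL_COLORS = LEVELS ** 3          # 256 x 256 x 256 = 16,777,216 colors


def pack_rgb(r, g, b):
    """Pack three 8-bit values into one 24-bit integer (assumed 0xRRGGBB order)."""
    for value in (r, g, b):
        if not 0 <= value < LEVELS:
            raise ValueError("channel values must be in the range 0..255")
    return (r << 16) | (g << 8) | b


def unpack_rgb(pixel):
    """Split a 24-bit integer back into its (R, G, B) components."""
    return (pixel >> 16) & 0xFF, (pixel >> 8) & 0xFF, pixel & 0xFF


if __name__ == "__main__":
    print(LEVELS, TOTAL_COLORS)
    print(hex(pack_rgb(255, 128, 0)), unpack_rgb(0xFF8000))
```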

Although the RGB model is the most common, there are several other color models as well.

- YUV is a color model that takes into account human visual perception and reduces the bandwidth for chrominance (or color) components. The Y in YUV refers to “luma” (brightness, or lightness). U and V provide color information and are “color difference” signals of blue minus luma (B-Y) and red minus luma (R-Y).

- The YPbPr color model is used in analog component video and its digital version, YCbCr, is used in digital video. Both are more or less derived from YUV, and are sometimes called Y’UV (Y “prime” UV), where Y’ represents the gamma-corrected luminance signal.

- HSV (hue, saturation, value), also known as HSB (hue, saturation, brightness), is often used by artists because they find it more intuitive to think about a color in terms of hue and saturation rather than additive or subtractive color components. HSV is a transformation of an RGB color space, so its components and colorimetry are relative to the RGB color space from which it is derived.

- HSL (hue, saturation, lightness/luminance) – also known as HLS – is quite similar to HSV, with “lightness” replacing “brightness.” The difference is that the brightness of a pure color is equal to the brightness of white, while the lightness of a pure color is equal to the lightness of a medium gray.
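The relationships in the list above can be sketched with Python's standard `colorsys` module. The helper names are hypothetical, and the luma weights (0.299, 0.587, 0.114) are the ITU-R BT.601 values, which are an assumption here because the text does not say which weights it has in mind. The color differences are left unscaled; real YUV and YCbCr encodings apply additional scale factors.

```python
import colorsys


def hsv_value(r, g, b):
    """Return the V ("value" or brightness) component of HSV; inputs are 0..1."""
    return colorsys.rgb_to_hsv(r, g, b)[2]


def hsl_lightness(r, g, b):
    """Return the L (lightness) component; colorsys orders the result as H, L, S."""
    return colorsys.rgb_to_hls(r, g, b)[1]


def luma_and_differences(r, g, b):
    """Return (Y, B-Y, R-Y) using assumed BT.601 luma weights, unscaled."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    return y, b - y, r - y


if __name__ == "__main__":
    # Pure red is as "bright" as white in HSV, but only as "light" as mid gray in HSL.
    print(hsv_value(1, 0, 0), hsv_value(1, 1, 1))
    print(hsl_lightness(1, 0, 0), hsl_lightness(0.5, 0.5, 0.5))
    # A neutral gray has luma equal to its level and color differences near zero.
    print(luma_and_differences(0.5, 0.5, 0.5))
```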

Color Spaces

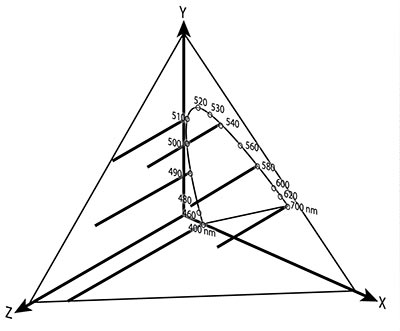

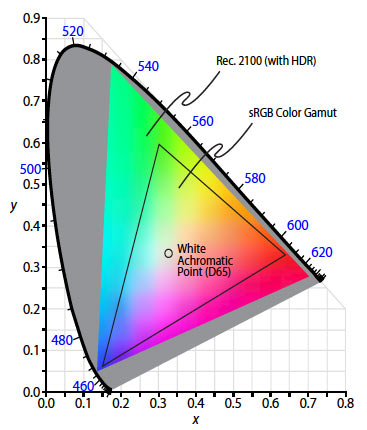

In 1931, the Commission Internationale de l’Éclairage (CIE) developed a method for systematically measuring colors in relation to the wavelengths of light they contain. This system became known as the CIE color model. In its chromaticity diagram, the x and y coordinates together describe hue and saturation independently of brightness. The pure, single-wavelength colors of the visible spectrum plot along a horseshoe-shaped curve that bounds the color space, and the perimeter of that curve is labeled with the wavelengths of visible light in nanometers. The CIE model allows you to specify the range of colors, also known as a “gamut,” that can be produced by a particular light source. All of the possible colors that a light source can produce fall within a triangle defined by the red, green, and blue color limits.

Pure spectral colors, labeled by wavelength, form the outer boundary of the Color Space Chromaticity Diagram of visible colors.
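As a rough illustration of how a gamut triangle is used, the sketch below converts CIE XYZ tristimulus values to the two chromaticity coordinates plotted on the diagram (x = X / (X + Y + Z), y = Y / (X + Y + Z)) and tests whether a point lies inside a triangle of primaries. The sRGB primary coordinates are used only as an example gamut and are an assumption, as are the function names; the text does not name a specific display.

```python
# Example gamut: sRGB primaries as (x, y) chromaticities (an assumption for illustration).
SRGB_PRIMARIES = ((0.64, 0.33), (0.30, 0.60), (0.15, 0.06))


def chromaticity(X, Y, Z):
    """Normalize XYZ tristimulus values to (x, y) chromaticity coordinates."""
    total = X + Y + Z
    if total == 0:
        raise ValueError("chromaticity is undefined when X + Y + Z is zero")
    return X / total, Y / total


def _cross(origin, a, b):
    """2D cross product of the vectors origin->a and origin->b."""
    return (a[0] - origin[0]) * (b[1] - origin[1]) - (a[1] - origin[1]) * (b[0] - origin[0])


def in_gamut(point, primaries=SRGB_PRIMARIES):
    """Return True if the (x, y) point lies inside or on the triangle of primaries."""
    red, green, blue = primaries
    signs = (_cross(red, green, point), _cross(green, blue, point), _cross(blue, red, point))
    has_negative = any(s < 0 for s in signs)
    has_positive = any(s > 0 for s in signs)
    # The point is inside when it is on the same side of all three edges.
    return not (has_negative and has_positive)


if __name__ == "__main__":
    print(in_gamut((0.3127, 0.3290)))   # near the D65 white point
    print(in_gamut((0.08, 0.83)))       # a saturated green near the spectral boundary
```

A point that fails this test is one the example display cannot reproduce directly, which is the situation color space conversion has to handle.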

Color space conversion is the translation of the representation of a color from one basis to another, for example from RGB to YUV or vice versa. This can be important when a transmitting device (or source) defines color using one color model, and the receiving device (or display) uses a different color model to define colors. If the source device has a larger color gamut than the display device, some of the source’s colors will fall outside of the display’s color space. This situation is called a “gamut mismatch.” Color space conversion corrects for gamut mismatch and allows the display to show an image that is as close as possible to the original image supplied by the source.
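A conversion between two representations can be sketched as a pair of inverse functions. The example below assumes 8-bit, full-range YCbCr with BT.601 luma weights (the form commonly used in JPEG files); this choice, and the function names, are assumptions for illustration and are not taken from any RGB Spectrum product. Values that land outside 0..255 after conversion are clamped, which is one simple way to handle colors the target cannot represent.

```python
def rgb_to_ycbcr(r, g, b):
    """Convert 8-bit RGB to full-range YCbCr (assumed BT.601 weights)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 + (b - y) / 1.772      # scaled blue-minus-luma difference
    cr = 128 + (r - y) / 1.402      # scaled red-minus-luma difference
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Invert rgb_to_ycbcr, rounding and clamping each channel to 0..255."""
    r = y + 1.402 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    # Solve the luma equation for green once red and blue are known.
    g = (y - 0.299 * r - 0.114 * b) / 0.587
    return tuple(min(255, max(0, round(c))) for c in (r, g, b))


if __name__ == "__main__":
    original = (255, 128, 0)
    encoded = rgb_to_ycbcr(*original)
    print(encoded)
    print(ycbcr_to_rgb(*encoded) == original)
```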

Industry Problem: Mismatches between source and display color spaces can result in images turning pink or other color imbalances.

RGB Spectrum Solution: RGB Spectrum’s products offer convenient color space conversion to correct for mismatches between source and display, so that colors are displayed accurately.

The CIE 1931 Color Space Chromaticity Diagram displays all possible color combinations.

Why Does Color Processing Matter?

Human vision is much more sensitive to changes in brightness (luminance or “Y” value) than to variations in color values. For this reason, some color information can be removed from signals to reduce the bandwidth needed to process and transmit graphics or video signals, while still maintaining the visible integrity of the color. This is usually accomplished by “subsampling” color values, and then using surrounding values to recreate the missing color values.

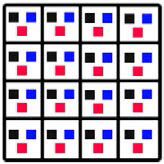

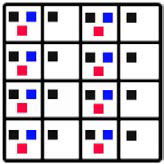

Color processing within the video processing space is often described in terms of color subsampling ratios: 4:4:4, 4:2:2, 4:1:1, 4:2:0, etc. The ratio describes a reference block of pixels that is conventionally four pixels wide and two rows high. The first number is the number of luminance values in each row of the block, the second number is the number of chrominance values (Cb and Cr, the blue and red color differences) sampled horizontally in the first row, and the third number is the number of chrominance values that change in the second row, so a 0 means the second row reuses the chrominance values of the first.
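The bandwidth effect of each ratio can be estimated with a short calculation. This sketch assumes the common convention in which the ratio J:a:b describes a block J pixels wide and two rows high, with a chrominance samples in the first row and b further samples in the second row, and it assumes 8 bits per sample; the function name is hypothetical.

```python
def bits_per_pixel(j, a, b, bit_depth=8):
    """Average bits per pixel for a J:a:b subsampling ratio (2-row block assumed)."""
    pixels = 2 * j                  # J pixels in each of two rows
    luma_samples = pixels           # every pixel keeps its luminance value
    chroma_samples = 2 * (a + b)    # Cb and Cr each contribute a + b samples
    return bit_depth * (luma_samples + chroma_samples) / pixels


if __name__ == "__main__":
    for ratio in [(4, 4, 4), (4, 2, 2), (4, 1, 1), (4, 2, 0)]:
        print(ratio, bits_per_pixel(*ratio))
```

Under these assumptions 4:4:4 needs 24 bits per pixel, 4:2:2 needs 16, and both 4:1:1 and 4:2:0 need 12.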

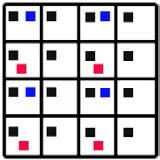

When every color element of every pixel is preserved, no color subsampling occurs, resulting in a full-bandwidth signal, or 4:4:4. In this 4x4 matrix, every pixel contains information about luminance (black), Cb (blue) and Cr (red).

Graphics and video signals with 4:4:4 color deliver the most vivid and detailed imagery, but they require a lot more bandwidth to process and transmit. 4K signal processors (like our MediaWall® V, QuadView® UHD and SuperView® 4K processors) are designed to support and maintain this high level of color saturation. In addition, our MediaWall and Galileo processors and QuadView/SuperView multiviewers all process 4:4:4 signals without color subsampling. For optimal compression and transmission of signals, our DGy™ JPEG2000 codecs use 4:4:4 processing to deliver lossless imagery for the most demanding applications.

The diagram above depicts 4:2:2 color. Here, all of the luminance values are transmitted but only half of the color values. Although some color information must be recreated, 4:2:2 color is vivid, and imagery remains visibly sharp and clear.
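A simple way to picture 4:2:2 is to keep every second chrominance value in a row and rebuild the missing ones from their neighbors. The sketch below does this for a single row of one chrominance channel; the function names and the use of linear interpolation are assumptions for illustration, and real processors may use other reconstruction filters.

```python
def subsample_422(chroma_row):
    """Keep every second chrominance sample in a row; luminance is not touched."""
    return chroma_row[::2]


def reconstruct_422(samples, width):
    """Rebuild a full-width row by averaging the kept samples on either side."""
    row = []
    for x in range(width):
        left = samples[x // 2]
        if x % 2 == 0:
            row.append(left)        # this position was transmitted as-is
        else:
            # Missing position: average with the next kept sample (or repeat at the edge).
            right = samples[min(x // 2 + 1, len(samples) - 1)]
            row.append((left + right) / 2)
    return row


if __name__ == "__main__":
    cb_row = [100, 110, 120, 130]
    kept = subsample_422(cb_row)
    print(kept)
    print(reconstruct_422(kept, len(cb_row)))
```

On a smooth gradient like this one, only the last value differs from the original, which is consistent with the observation that 4:2:2 imagery remains visibly sharp.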

To further reduce bandwidth, some processors subsample color at 4:1:1 (once every 4 pixels horizontally). However, this often results in bland color and image artifacts. For these reasons, this algorithm is only used in a limited range of applications.

Another subsampling algorithm, which uses about the same bandwidth as 4:1:1 while delivering much better color resolution, is 4:2:0 (often used when processing and transmitting MPEG signals). This is the color sampling ratio used by our Zio® and DSx™ H.264 codecs. In such processing, color values are subsampled by half both horizontally and vertically. A typical 4:2:0 color subsample is shown at left. The distribution of color values allows a processor to recreate the original color more effectively than 4:1:1.
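The halving in both directions can be sketched on a small chrominance plane. This example averages each 2x2 block into one stored value and then rebuilds the plane by repeating that value; the averaging, the nearest-neighbor reconstruction, and the function names are all assumptions for illustration, since actual codecs define their own sample positions and filters.

```python
def subsample_420(plane):
    """Average each 2x2 block of a chrominance plane (even width and height assumed)."""
    small = []
    for y in range(0, len(plane), 2):
        row = []
        for x in range(0, len(plane[0]), 2):
            total = plane[y][x] + plane[y][x + 1] + plane[y + 1][x] + plane[y + 1][x + 1]
            row.append(total / 4)
        small.append(row)
    return small


def upsample_420(small):
    """Repeat each stored value over its 2x2 block (nearest-neighbor reconstruction)."""
    full = []
    for row in small:
        wide = [value for value in row for _ in range(2)]
        full.append(wide)
        full.append(list(wide))     # the second row of the block reuses the same values
    return full


if __name__ == "__main__":
    cr_plane = [
        [10, 20, 30, 40],
        [10, 20, 30, 40],
        [50, 60, 70, 80],
        [50, 60, 70, 80],
    ]
    stored = subsample_420(cr_plane)
    print(stored)
    print(upsample_420(stored))
```

Only a quarter of the chrominance values are stored, yet each stored value represents a compact square of pixels rather than a four-pixel horizontal run as in 4:1:1.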

Color processing, conversion, and transmission can be a challenge for video processors, but many are designed to deliver excellent performance on this front. If vivid color and sharp imagery are important to your application, be sure to look for a processor that is designed to process and transmit color resolutions effectively.
